Summarizing the evidence on AI and chicken consciousness

Rethink Priorities has recently come out with the Digital Consciousness Model, a groundbreaking synthesis of the evidence for and against the consciousness of large language models and chickens. I think the Digital Consciousness Model is some of the most exciting work coming out of consciousness studies recently, and I want to walk you through what we’ve learned from it.

I’m going to start by talking about the model’s findings. Then I’m going to get into the nitty-gritty details, which are kind of boring (I don’t want you guys to bounce off the post because you’re trying to understand Bayesian hierarchical models).

What We Learn From The Digital Consciousness Model

The Digital Consciousness Model doesn’t offer a new theory of consciousness or purport to say once and for all which entities are conscious. Instead, it organizes the information we already have about consciousness in a structured way that makes it easier for everyone to think more clearly about consciousness—regardless of their viewpoints about the matter.

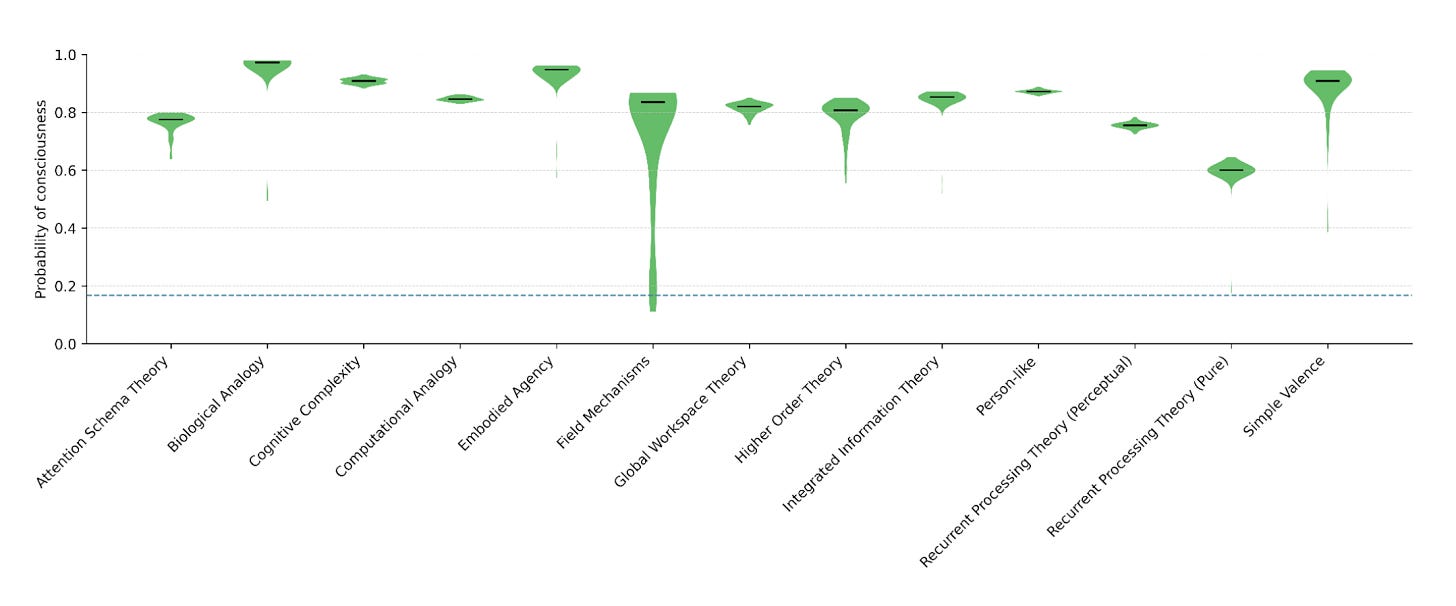

On a very high level, the Digital Consciousness Model looks at thirteen “stances”, or ideas about what evidence we should use to figure out if an entity is conscious. Some of the stances are philosophical or neuroscientific viewpoints, such as that consciousness comes from being able to reflect on your own thoughts and feelings, having positive and negative emotions, or having a body that you use to affect the world. Some of the stances are rough heuristics: an entity might be more likely to be conscious if it is biologically similar to humans, or seems “personlike”, or is very smart.

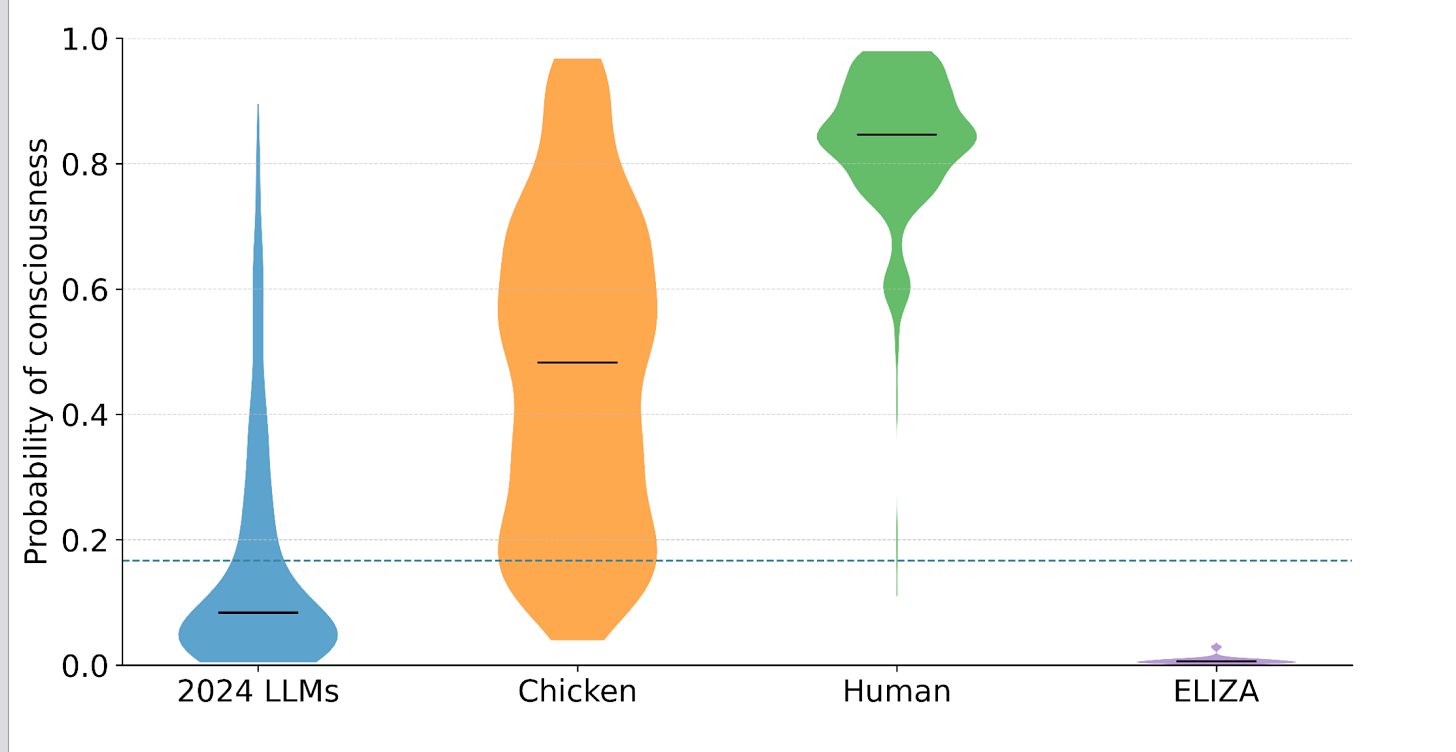

If you ascribe the same probability to each stance that consciousness experts do, you should conclude:

There is very strong evidence that humans are conscious (likelihood ratio: 28.33).[1]

There is strong evidence that chickens are conscious (likelihood ratio: 4.6).

There is evidence that 2024 LLMs[2] are not conscious (likelihood ratio: 0.43).

There is very strong evidence that the chatbot ELIZA is not conscious (likelihood ratio: 0.05).

By looking at this picture, you can see that we’re pretty certain that humans are conscious and very certain that ELIZA is not conscious. We’re uncertain about whether chickens and 2024 LLMs are conscious. But we’re uncertain in different ways. We are pretty certain about how uncertain we are about whether 2024 LLMs are conscious (we think there’s about a 10% chance they’re conscious). But we’re not only uncertain about whether chickens are conscious, we’re very uncertain about how uncertain we are about whether chickens are conscious.

But the Digital Consciousness Model is useful in other ways too.

For example, what if you believe strongly in a particular stance? You can look at the Digital Consciousness Model and figure out how likely it is that an entity is conscious given your beliefs about consciousness.

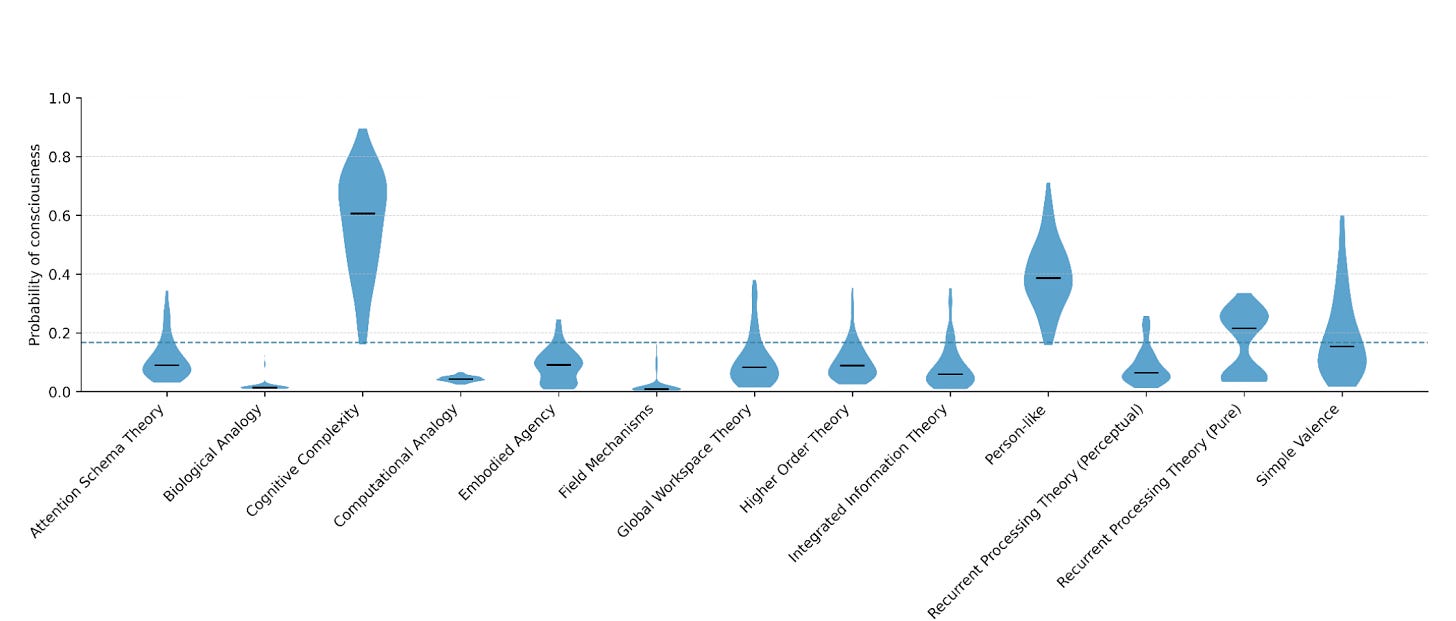

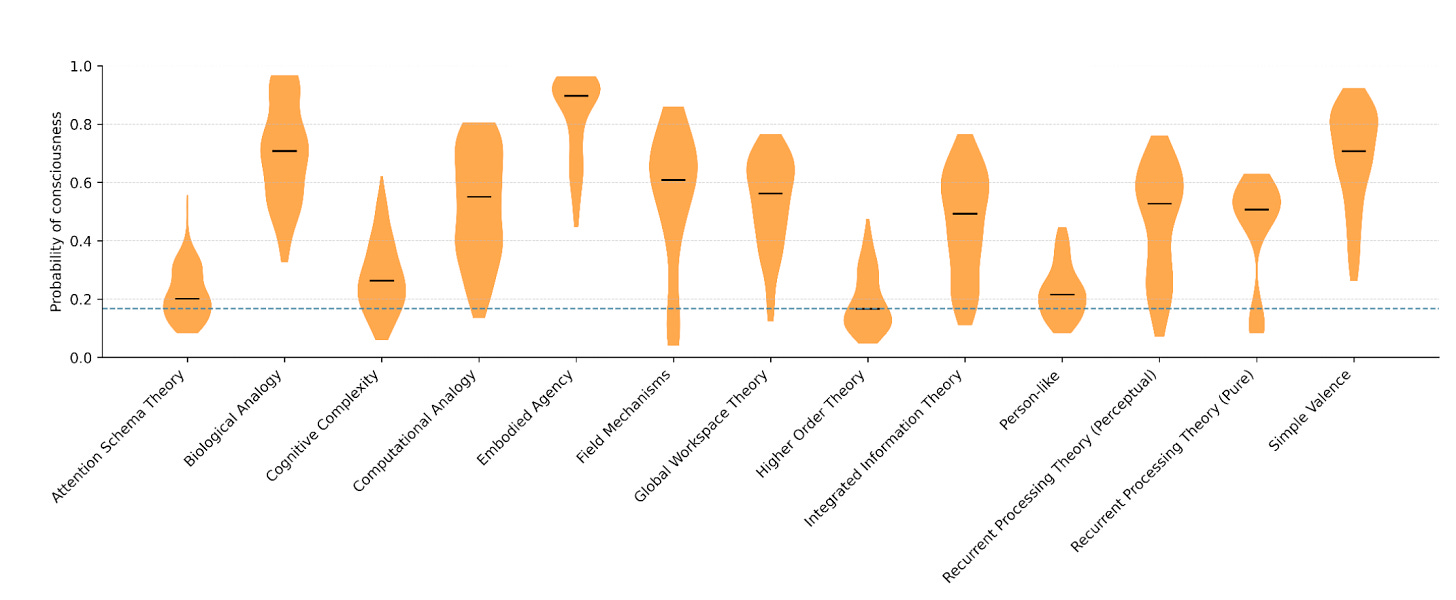

For example, 2024 LLMs do best on the stances cognitive complexity (“how rich, interconnected, and complicated is this entity’s thinking?”) and personlike (“does this entity seem like a person?”). They do worst on the stances embodied agency (“does this entity have a body it uses to interact in a complex way with the physical world?”) and biological analogy (“is this entity biologically similar to humans?”). Conversely, chickens do best on embodied agency and biological analogy. They do worst on personlike, higher order theory (“does this entity think about its own thoughts and feelings?”), and attention schema (“does this entity have a model of what it’s paying attention to at any time?”).

In an ideal world, you would be able to enter your own weight on various stances into an app and receive a customized set of likelihood ratios. WORK ON IT, RETHINK PRIORITIES. Until then, if you think multiple stances are plausible, you are just going to have to peer at charts until you have a vibe.

You might notice that the stances that 2024 LLMs do best on are the ones chickens do worst on, and vice versa. This suggests that people who believe chickens are conscious should be less likely to believe 2024 LLMs are conscious, and vice versa. The only person I know who actually acts like that is Eliezer Yudkowsky, who is concerned about LLMs but not chickens. Everyone else seems to be more likely to believe that 2024 LLMs are conscious if they believe chickens are conscious; about this, more later.

I have seen people say that the Digital Consciousness Model only gives an 80% chance that humans are conscious. This is a misinterpretation.

Pretend that you’re a Martian arriving on the planet Earth. Through a bizarre coincidence, you have exactly the same understanding of neuroscience, cognitive science, and philosophy of mind that humans do. However, you don’t know anything about the entities on Earth. You think about it, and you decide that, in your state of perfect ignorance, you put a 1 in 6 chance on any entity you encounter on Earth being conscious. (In Bayesian terms, this is your “prior.”)

You arrive on Earth and begin to collect information about how these Earth entities think. (In Bayesian terms, this is your “evidence.”) Afterward, you come to new conclusions about which entities are conscious. (In Bayesian terms, this is your “posterior.”)

The claim of the Digital Consciousness Model is that our example Martian should conclude there is an 80% chance humans are conscious. Obviously, your prior that humans are conscious is much higher than 1 in 6, because you’re a human and you’re conscious. Indeed, you don’t learn anything from the Digital Consciousness Model about the properties of human beings: you presumably already know that human beings are intelligent, are capable of self-modeling, have feelings, have bodies, and are biologically similar to human beings (...I sure hope).
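To make the Martian's update concrete, here is a minimal sketch of the odds form of Bayes' rule, using the 1-in-6 prior and the four likelihood ratios quoted earlier in this post. The function name is my own, and applying a single summary likelihood ratio is a simplification: the model itself averages over many simulated runs, so these figures land near the headline numbers (roughly 85% for humans and 8% for 2024 LLMs) rather than matching them exactly.

```python
def posterior_from_likelihood_ratio(prior: float, likelihood_ratio: float) -> float:
    """Odds-form Bayes update: posterior odds = prior odds * likelihood ratio."""
    prior_odds = prior / (1.0 - prior)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1.0 + posterior_odds)


# The Martian's prior from the thought experiment above.
MARTIAN_PRIOR = 1 / 6

# Likelihood ratios as quoted in this post.
LIKELIHOOD_RATIOS = {
    "humans": 28.33,
    "chickens": 4.6,
    "2024 LLMs": 0.43,
    "ELIZA": 0.05,
}

for entity, ratio in LIKELIHOOD_RATIOS.items():
    posterior = posterior_from_likelihood_ratio(MARTIAN_PRIOR, ratio)
    print(f"{entity}: {posterior:.2f}")
```

A likelihood ratio above 1 pushes the posterior above the prior, and a ratio below 1 pushes it below.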

However, the Digital Consciousness Model wanted to have all the probabilities be comparable. It would be confusing if, in addition to showing how convincing the evidence is in the abstract, the model accounted for the fact that we’re all very sure humans are conscious.

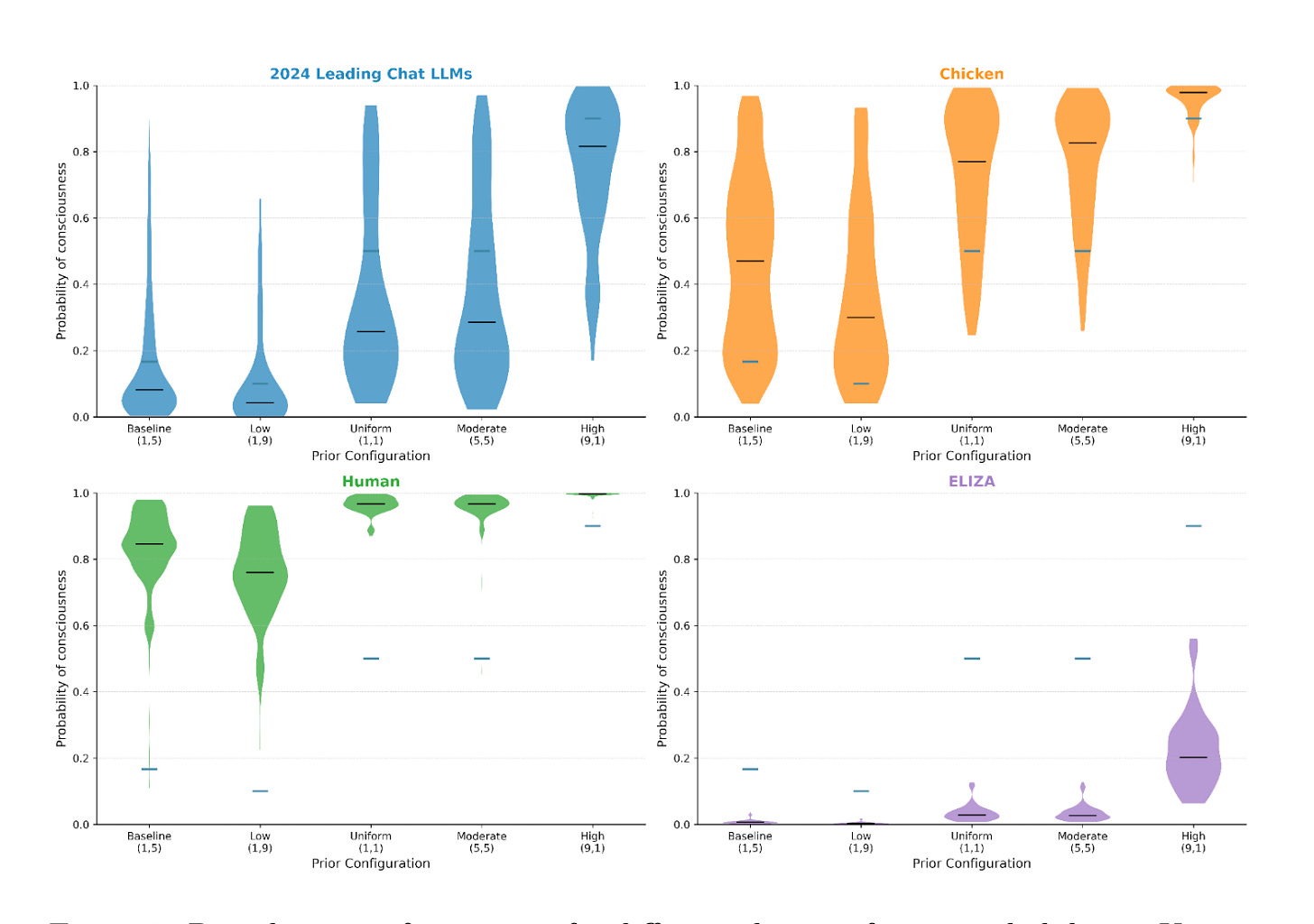

Indeed, the results of the Digital Consciousness Model are very sensitive to your prior:

Note that, if we’re being careful and rigorous here, your prior is not the probability you give right now that chickens or 2024 LLMs are conscious. You already know a lot of facts about chickens and 2024 LLMs—including a number of very basic facts which are included in the model, such as “LLMs can talk” and “chickens are animals.” If your prior is the probability you give right now that chickens are conscious, you’re going to be double-counting a lot of evidence. Instead, your prior is your probability that any given entity (that you’re bothering to ask this question about, so not a rock) is conscious.

Regardless of the prior, we conclude that humans are probably conscious and the therapy chatbot ELIZA is probably not conscious. But whether chickens or 2024 LLMs are conscious depends a lot on your prior. Since the evidence for chickens and 2024 LLMs isn’t overwhelming, your broader take about how likely it is that any entity is conscious matters a lot.
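If you want to see the prior sensitivity for yourself, here is a rough sketch that pushes the likelihood ratios quoted in this post through three different priors. The 5% and 50% priors are values I picked for illustration (only the 1-in-6 prior comes from the post), and a single-ratio update is a simplification of what the model actually does.

```python
def posterior(prior: float, likelihood_ratio: float) -> float:
    """Odds-form Bayes update for a single likelihood ratio."""
    odds = prior / (1.0 - prior) * likelihood_ratio
    return odds / (1.0 + odds)


# Illustrative priors: 5% and 50% are my assumptions, 1/6 is from the post.
PRIORS = [0.05, 1 / 6, 0.50]

# Likelihood ratios as quoted in this post.
LIKELIHOOD_RATIOS = {
    "humans": 28.33,
    "chickens": 4.6,
    "2024 LLMs": 0.43,
    "ELIZA": 0.05,
}

# One row of posteriors per entity, one column per prior.
table = {
    entity: [posterior(prior, ratio) for prior in PRIORS]
    for entity, ratio in LIKELIHOOD_RATIOS.items()
}

for entity, row in table.items():
    cells = "  ".join(f"{value:.2f}" for value in row)
    print(f"{entity:>10}: {cells}")
```

Under these assumptions, humans stay above 50% and ELIZA stays below 5% across the whole range, while chickens cross from "probably not" to "probably yes" as the prior rises.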

Similarly, we see that how certain we are about our uncertainty changes with the prior. You’re more uncertain about how uncertain you are about whether 2024 LLMs are conscious, if you start out believing that lots of beings are conscious. Similarly, if you start out believing that lots of beings are conscious, you’re really quite certain of chicken consciousness.

Probably differences in priors explain why people who believe chickens are conscious are more likely to believe 2024 LLMs are conscious: they’re more likely to believe anyone is conscious.

So why look at humans in the Digital Consciousness Model at all? Well, if the model found that humans were unlikely to be conscious, then it would be a very bad model; including humans is a sense check. And since we’re more certain that humans are conscious than we are of any particular theory of consciousness, we can reduce the probability we put on stances that are more likely to conclude that humans aren’t conscious:

Sorry, field mechanisms.

Now, we have some major caveats about the Digital Consciousness Model.

First, the Digital Consciousness Model represents all indicators and features as binary in order to make the model simpler: either you can do self-modeling or you can’t. In reality, entities can be more or less good at self-modeling, and entities that are better at self-modeling are more likely to be conscious. The Digital Consciousness Model does incorporate uncertainty, so this is only a problem if you think that we’re (say) very certain that chickens do only a little self-modeling. I think in general, the less an entity has a particular trait, the less certain we are that they have it at all, so I don’t view this as a serious concern.

Second, many indicators are very difficult to assess. For example, it is a complex question, widely disputed among the top LLM psychologists,[3] whether LLMs can be said to “have” “preferences.” LLMs in particular are so goddamn weird that they challenge many of our basic concepts about how thought works. If you understand these concepts differently from how Rethink Priorities’ experts understood them, you will likely get different answers.

Third, “what is the probability that chickens maintain an internal representation of themselves different from how they represent other agents?” is a confusing and hard-to-answer question. I think even kind of fake probabilities can be helpful to clarify your thinking, but you shouldn’t take the results of the Digital Consciousness Model too literally.

Fourth, many stances were excluded. I think many of the excluded stances are pretty stupid (panpsychism, quantum mechanisms) or meaningless in a model of this kind (error theory), but if Your Favorite Stance was excluded the Digital Consciousness Model won’t be super helpful for you.

Fifth, the model only includes two entities that reasonable people are uncertain about (chickens and 2024 LLMs). I understand that Rethink Priorities intends to expand the model going forward to include a wider range of controversial entities, including 2026 frontier models, less humanlike AI systems such as AlphaFold or the Waymo Driver, and invertebrate species such as insects and nematodes. I eagerly anticipate their work!

How The Digital Consciousness Model Works

The Digital Consciousness Model is a Bayesian hierarchical model.

The model starts with a prior: an estimate of how likely an entity is to be conscious, before you learn anything about that entity. You then score the presence or absence of 206 traits called “indicators”. Indicators include:

Is the entity made of carbon?

Does the entity maintain an internal representation of itself that is different from how it represents other agents?

Can the entity solve abstract visual reasoning problems?

Each of the indicators provides evidence about whether the entity has one or more of twenty features. Features include:

Is the entity biologically similar to humans? (Being carbon-based is an indicator of this feature.)

Does the entity model its own traits, predict its own behavior, and differentiate itself from others? (Maintaining a distinct self-representation is an indicator of this feature.)

Can an entity behave intelligently (solve novel problems, do abstract reasoning, etc.)? (Being able to solve abstract visual reasoning problems is an indicator of this feature.)

(The model actually goes indicator --> subfeature --> feature --> stance but I’m mostly skipping subfeatures to simplify the explanation.)

For each indicator, we ask:

If a system doesn’t have a feature, what is the probability that it has this indicator? This is called “demandingness.”

Very demanding indicators are rare overall and much more common in entities that have the feature, but an entity might lack them even if it really has the feature.

In math terms, this is Specificity / (1 - Specificity) (don’t worry if you don’t know what that means).

If a system has a feature, how much more likely is it to have the indicator compared to if it doesn’t have the feature? This is called “support.”

Indicators which strongly support a feature are much more common if an entity has the feature, but might also be really common in entities without the feature.

In math terms, this is Sensitivity / (1 - Specificity) (again, don’t worry if you don’t know what that means).
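For readers who do want to know what that means, here is a small sketch of the two formulas exactly as this post states them. It uses the standard definitions: sensitivity is the probability that an entity with the feature has the indicator, and specificity is the probability that an entity without the feature lacks the indicator. The function names and the example values (0.6 and 0.9) are mine, not from the report.

```python
def support(sensitivity: float, specificity: float) -> float:
    """Sensitivity / (1 - Specificity): how much more likely the indicator is
    when the feature is present than when it is absent."""
    return sensitivity / (1.0 - specificity)


def demandingness(specificity: float) -> float:
    """Specificity / (1 - Specificity): the odds that an entity without the
    feature also lacks the indicator."""
    return specificity / (1.0 - specificity)


# Hypothetical indicator: present in 60% of entities that have the feature
# and in 10% of entities that lack it (so specificity is 0.9).
example_sensitivity = 0.6
example_specificity = 0.9

print(f"support: {support(example_sensitivity, example_specificity):.1f}")
print(f"demandingness: {demandingness(example_specificity):.1f}")
```

Note that demandingness depends only on specificity: the rarer the indicator is among entities without the feature, the more demanding it is.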

In turn, each feature provides evidence for one or more of thirteen stances—perspectives on what gives us evidence for an entity being conscious. For example:

Recurrent processing (perceptual) holds that consciousness “arises when perceptual inputs are subject to iterative refinement through structured, feedback-driven loops” (pg. 52), similar to vision in the mammalian brain.

Biological similarity provides evidence under the recurrent processing (perceptual) stance (moderate support, weakly demanding).

Biological analogy holds that entities are more likely to be conscious if they’re biologically similar to humans.

As you might expect, biological similarity provides evidence under the biological analogy stance (overwhelming support, overwhelmingly demanding).

Intelligence provides evidence under the biological analogy stance (weak support, strongly undemanding).

Attention schema holds that entities are conscious if they have a model of what they’re paying attention to, which they use to deliberately control and direct their attention.

Self-modeling provides evidence under the attention schema stance (strong support, strongly demanding).

Intelligence provides evidence under the attention schema stance (weak support, moderately demanding).

Cognitive complexity holds that entities are more likely to be conscious if they think in a rich and complicated way.

Intelligence provides evidence under the cognitive complexity stance (moderate support, strongly demanding).

I am not A Statistics but I have done my best to explain what Bayesian hierarchical modeling is. All mistakes are my own. If you hate math, you can skip to the part where I say “math part over.”

For each link (indicator to feature, feature to stance), you have a probability distribution about how much evidence the former provides about the latter. (So you might think, based on the support and the demandingness, that the likelihood ratio is between 1 and 8.) For each indicator, you have a probability of whether the indicator exists. (So you might think it’s 60% likely the entity has the indicator.) You also have a probability distribution about what your prior is. (You might think it’s between 10% likely and 30% likely that the entity is conscious, before looking at any information.)

So you draw a bunch of random numbers and decide that in this run the entity has the indicator, the indicator-to-feature likelihood ratio is 2, the feature-to-stance likelihood ratio is 4, and your prior is 20%. Then, using Bayesian statistics, you go “the entity has this set of indicators, which makes me believe it is X much more likely to have this feature, which makes me believe it is Y more likely to be conscious according to this stance. Now I am going to combine all the stances together, weighted according to how plausible the experts thought they were. I have a final likelihood ratio, and I can combine that with my prior to find out my posterior.”

You then repeat that tens of thousands of times and average together all the posteriors you get. Eventually, new runs stop changing what the posterior value is. That’s your final posterior, and you can write your report.
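Here is a toy version of that loop, in case seeing it helps. It is emphatically not the Digital Consciousness Model: it has one indicator, one feature, and one stance, and the way it chains them together (a 50/50 baseline for the feature, uniform distributions over each range, no penalty when the feature is absent) is my own simplification. The ranges are the example numbers from the paragraphs above, and I have reused the 1-to-8 range for the feature-to-stance ratio as an assumption.

```python
import random


def to_odds(p: float) -> float:
    return p / (1.0 - p)


def to_prob(odds: float) -> float:
    return odds / (1.0 + odds)


def one_run(rng: random.Random) -> float:
    """Draw one set of uncertain quantities and return that run's posterior."""
    prior = rng.uniform(0.10, 0.30)        # prior somewhere between 10% and 30%
    has_indicator = rng.random() < 0.60    # 60% chance the indicator is present
    lr_indicator = rng.uniform(1.0, 8.0)   # indicator-to-feature likelihood ratio
    lr_feature = rng.uniform(1.0, 8.0)     # feature-to-stance ratio (assumed range)

    # Toy assumption: the feature starts at even odds and only a present
    # indicator shifts those odds.
    feature_odds = lr_indicator if has_indicator else 1.0
    has_feature = rng.random() < to_prob(feature_odds)

    # Toy assumption: a present feature multiplies the prior odds of
    # consciousness, and an absent feature leaves them unchanged.
    posterior_odds = to_odds(prior) * (lr_feature if has_feature else 1.0)
    return to_prob(posterior_odds)


def simulate(n_runs: int = 20_000, seed: int = 0) -> float:
    """Average the posterior over many independent runs."""
    rng = random.Random(seed)
    return sum(one_run(rng) for _ in range(n_runs)) / n_runs


if __name__ == "__main__":
    print(f"average posterior: {simulate():.3f}")
```

Keeping the individual posteriors instead of only their average would also give you the spread across runs, which is the "how uncertain are we about our uncertainty" part.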

Math part over.

Why would you do this silly thing? It allows you to incorporate all kinds of uncertainty. Not only are you uncertain about whether LLMs can do a particular thing, you’re uncertain about what that means for their broader psychology, and uncertain what details of their psychology mean for whether they’re conscious under a particular stance on what consciousness means. Since everyone is in fact very uncertain about these things, the model allows us to quantify exactly how uncertain we are. From this, we can derive findings like “we are pretty certain of how uncertain we are about 2024 LLMs, but we are very uncertain of how uncertain we are about chickens.”

For a further explanation of why Bayesianism is useful, please see dynomight’s excellent ‘Bayes is not a phase’.

[1] The likelihood ratio is the ratio of how likely the evidence is if the statement you’re wondering about is true to how likely the evidence is if the statement you’re wondering about is false.

[2] I will insistently say ‘2024 LLMs’ because LLMs are improving rapidly over time and later models may be more likely to be conscious.

[3] Anons on X.

Dang, I really gotta get my robot book done before the scientists all solve "what is a person" in a less sexy format than I was going to.

The thesis of that book is basically, a person is capable of saying no to you. That is to say, it has its own agenda which it follows regardless of orders. It initiates plans on its own and has preferences it follows.

In the book Service Model (highly recommended!), the robot is quite emphatic that he's not a person and he seems a lot less personlike than most fictional robots. He *can't* come up with his own tasks to do. But he eventually develops the capacity for malicious compliance: he can choose to do his tasks his own *way,* which I feel does count as agency.

But I think agency is necessary and not sufficient 🤔 There's more that goes into a person than that.

When I think of “what kind of being deserves rights,” though, I think more of the capacity to suffer. If it can't suffer, does it exactly need rights?

And then I think of the horrific dystopia it would create if we told the makers of AI (some of whom very much do want to create a person) that it doesn't count if the robot can't suffer.

The thing is that a test ceases to be useful once it's used as a *goal,* like if you trained a cat to respond a certain way to mirrors, that's not the same as a cat having a self concept. And if people create AI purely to meet one of the standards we come up with for personhood, we may find it achieved it without the underpinning of interior reality the standard was supposed to measure. Like, we've solved “being able to pass for human in an ordinary conversation” but it turns out that that tells us less than you'd think about what's going on under the hood.

These thoughts are kind of a tangent, sorry. But thanks for writing this post to explain the basics.

It's interesting to think about what that prior over "humans are conscious" means. You can imagine thinking about:

(1) The probability you yourself are conscious, conditioning on the fact that you have access to direct evidence of your own consciousness.

(2) The probability other people are conscious, conditioning on the fact that you are a human and have access to direct evidence of your own consciousness.

(3) The probability that people are conscious, supposing you are an alien with direct evidence of your own consciousness, but with an alien biology which is sorta-kinda similar to a human.

(4) The probability that people are conscious, supposing you are an alien with direct evidence of your own consciousness, but with an alien biology which is very different from a human.

(5) The probability that people are conscious, supposing you are an alien (or AI) with a biology/design very different from humans, and/or without any access to evidence of your own consciousness.

Personally, I think I'd have to give (5) a much lower number than (1)...